Monica, a special education teacher, had been working with her school’s student support team (SST) monitoring the progress of a first-grade student, Sam, as he moved through the school’s response-to-intervention (RTI) process.

In Tier 1, Sam received 90 minutes of reading instruction per day in the general education classroom. Weekly progress monitoring showed that Sam was falling behind his classmates. He was then moved to Tier 2, where he received an additional 20 minutes of reading instruction daily. Although Sam showed some improvement over the next 8 weeks, he was still well below what was expected in first grade. Sam’s performance on multiple measures (i.e., initial sound fluency, phoneme segmentation fluency, oral reading fluency) fell in the high-risk category.

Therefore, it was decided to provide Sam with Tier 3 interventions and progress monitoring in addition to the interventions and instruction he was receiving in Tiers 1 and 2. Sam’s mother was updated on Sam’s progress every few weeks, but she was becoming increasingly concerned. She remembered having a difficult time learning to read when she was a child, and she did not want her son to struggle as she had.

Sam’s mother decided to contact Monica and ask if the school would evaluate him for dyslexia. Monica knew that the official eligibility category under IDEA was Specific Learning Disability (SLD), but she was not sure if she was allowed to use the term dyslexia or if the process of identification was somehow different for dyslexia.

Introduction

There is often confusion about the terms used to label or describe a reading problem. Clinicians and researchers use different terminology than the schools. For example, medical professionals, psychologists, and other practitioners outside of the school often use the terms dyslexia, reading disorder, and specific learning disorder. Schools and educators use the terms reading difficulty and specific learning disability in reading. The preferred terms in a field can change over time, further complicating the issue (e.g., changes in the Diagnostic and Statistical Manual of Mental Disorders; Snowling & Hulme, 2012).

The language used in schools comes from federal and state educational laws. Laws define the criteria under which students have a guaranteed right to services. For example, students who struggle with reading may receive services under IDEA (2006), or they may receive support through a 504 plan (U.S. Department of Education, Office for Civil Rights, 2016). Compliance with these laws and the mission to educate all students drive schools’ decision making; in other words, a school’s primary focus is on determining the need for specialized instruction, accommodations, and modifications.

Confusion regarding terminology is also commonly coupled with confusion regarding identification procedures. Specifically, although many teachers and parents possess a general understanding of RTI, “a process that determines if the child responds to scientific, research-based intervention” for the purpose of identifying students with a specific learning disability (SLD; IDEA, 2006), many school-based personnel, such as Monica, are unclear about the relation between processes and related assessments used for SLD identification and those used for the identification of dyslexia (Tucker, 2015).

To clarify this confusion, the Office of Special Education and Rehabilitative Services (2015) issued a Dear Colleague letter that stated, “The purpose of this letter is to clarify that there is nothing in the IDEA that would prohibit the use of the terms dyslexia, dyscalculia, and dysgraphia in IDEA evaluation, eligibility determinations, or [individualized education program] documents.”

Greater understanding of the law and of specific assessments that can be used for the identification of dyslexia can help school personnel understand the connection between the criteria for SLD eligibility under IDEA and dyslexia.

Dyslexia and special education eligibility

Monica sought clarification from her school’s special education coordinator, who explained that although dyslexia is not a disability category under IDEA (2006), students with dyslexia can receive services under the SLD category.

In order for any student to be eligible for services under IDEA (2006), the student must (a) be identified as having a disability that falls under one of IDEA’s categories of disability and (b) have a demonstrated educational need. In other words, if a student has an identified disability, such as dyslexia, but is making appropriate educational gains according to school-based norms or expectations, that student may not qualify for special education services.

For students with dyslexia, in order to be eligible under the category of SLD, RTI or other educational data may be used to demonstrate that the disability has a significant educational impact (Mather & Wendling, 2011). Therefore, some students who have been identified with dyslexia may meet state-determined criteria for the special education category of SLD, whereas others may not.
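The two-part test described above can be sketched as a small decision function. This is only an illustration: the `StudentRecord` class, its field names, and the `may_qualify_under_idea` function are hypothetical, and actual eligibility decisions rest on team judgment and state-determined criteria rather than a simple true/false check.

```python
from dataclasses import dataclass


@dataclass
class StudentRecord:
    """Hypothetical record; the field names are illustrative assumptions."""
    has_idea_category_disability: bool       # part (a): e.g., dyslexia under SLD
    has_demonstrated_educational_need: bool  # part (b): e.g., shown by RTI data


def may_qualify_under_idea(student: StudentRecord) -> bool:
    """Both parts must hold; either one alone is not sufficient."""
    return (
        student.has_idea_category_disability
        and student.has_demonstrated_educational_need
    )


# A student with identified dyslexia who is making appropriate gains
making_gains = StudentRecord(True, False)
# A student with identified dyslexia whose data show a significant impact
struggling = StudentRecord(True, True)

print(may_qualify_under_idea(making_gains))  # False
print(may_qualify_under_idea(struggling))    # True
```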

Under IDEA and its implementing regulations, SLD is defined, in part, as:

A disorder in one or more of the basic psychological processes involved in understanding or in using language, spoken or written, that may manifest itself in the imperfect ability to listen, think, speak, read, write, spell, or to do mathematical calculations, including conditions such as perceptual disabilities, brain injury, minimal brain dysfunction, dyslexia [italics added], and developmental aphasia. (20 U.S.C. §1401[30]; 34 C.F.R. §300.8[c][10])

Although the regulations contain a list of conditions under the definition of SLD that includes dyslexia, the list is not exhaustive. Whereas the majority of students with SLD have reading difficulties (~75% to 80%; Learning Disabilities Association of America, n.d.), many also have difficulties in writing (dysgraphia), mathematics (dyscalculia), organization, focus, listening comprehension, or a combination of these.

Characteristics of Dyslexia

Dyslexia is a reading disorder in which the core problem involves decoding and spelling printed words that is not due to low intelligence or inadequate instruction (Hudson, High, & Al Otaiba, 2007). These weaknesses often result in difficulty with comprehending written material.

Although dyslexia is the most common type of reading disability, other types of reading disorders have been reported. For example, it is estimated that 3% to 10% of school-age children demonstrate adequate word-level abilities (word recognition and decoding) but nevertheless struggle with comprehension of written text (Cain & Oakhill, 2006, 2011; Leach, Scarborough, & Rescorla, 2003; Nation, 2001).

These students may demonstrate weaknesses in broader language abilities, such as semantics, syntax, inference making, self-monitoring, and executive function (Cain & Oakhill, 2011; Cain & Towse, 2008; Cutting, Materek, Cole, Levine, & Mahone, 2009; Locascio, Mahone, Eason, & Cutting, 2010).

Some states have passed specific legislation related to dyslexia (see Dyslegia.com for an up-to-date list); others are attempting to pass legislation. Many of these laws require public schools to screen children for dyslexia during kindergarten, first grade, or second grade. A few of the states require teacher preparation programs to offer courses on dyslexia and for teachers to have in-service training (Youman & Mather, 2015). As of 2015, 14 states provided specific dyslexia handbooks for educators, parents, and legislators with clarification on newly passed dyslexia legislation and roles and responsibilities of state and local education agencies (e.g., Tennessee Department of Education, 2017).

The Eligibility Process

Sam continued to struggle despite increasingly intensive and targeted interventions; therefore, recommendations were made and implemented based on the team’s review of his performance and instructional history. Monica and other members of the support team, along with Sam’s mother, were ready to consider whether a comprehensive evaluation should be conducted to determine if Sam has a disability and is potentially eligible for special education services.

To determine eligibility under the SLD category, current federal law allows eligibility determinations to be made in several ways or through use of a combination of methods: a discrepancy between the individual’s ability (usually based on an IQ score) and achievement (usually based on scores from an individually administered, norm-referenced test of achievement), a pattern of strengths and weaknesses (among an individual’s cognitive and achievement scores) that suggests the presence of a SLD, or failure to respond to scientifically-based instruction (in schools using an RTI model; Mather & Wendling, 2011).

Before a student is referred for a comprehensive evaluation, most schools try to intervene with additional support for the student (Arden & Pentimonti, 2017). If concerns continue, a teacher may turn to a student support team for help. These teams have many different names, such as pupil services team, student assistance teams, teacher assistance teams, or instructional support teams. The teams are composed of teachers and other professionals (e.g., school psychologist, speech-language pathologist) and may include the principal and relevant specialists.

Many schools implement a multitiered-system-of-supports model, such as RTI. Although there is not one standard way to implement RTI, typically three or four tiers are provided with increasing levels of intervention (Arden & Pentimonti, 2017).

RTI models provide early interventions that may help students overcome academic difficulties or help educators pinpoint areas of persistent need. Using RTI exclusively as an identification model, however, could bring negative consequences to struggling learners (as of 2012, at least 12 states mandated RTI, at least in part, for identification of SLD; Zirkel, n.d.). Low reading performance alone, which may be evident as students move through tiers of instruction, is insufficient for the identification of dyslexia (Berninger & May, 2011).

The comprehensive evaluation

Through the RTI process, Sam’s teachers were able to document lack of adequate progress, but they did not have enough information to understand the cause of his difficulties.

Although schools do not commonly use the term dyslexia, it is important for school personnel to understand the specific areas that can be affected by dyslexia. School personnel can then identify appropriate measures to use in an evaluation and link data from the evaluation to the development of an individualized education program (IEP). One approach to eligibility determination is a process put forth by Flanagan and colleagues (Flanagan, Ortiz, Alfonso, & Dynda, 2006a; Flanagan, Ortiz, Alfonso, & Mascolo, 2002, 2006).

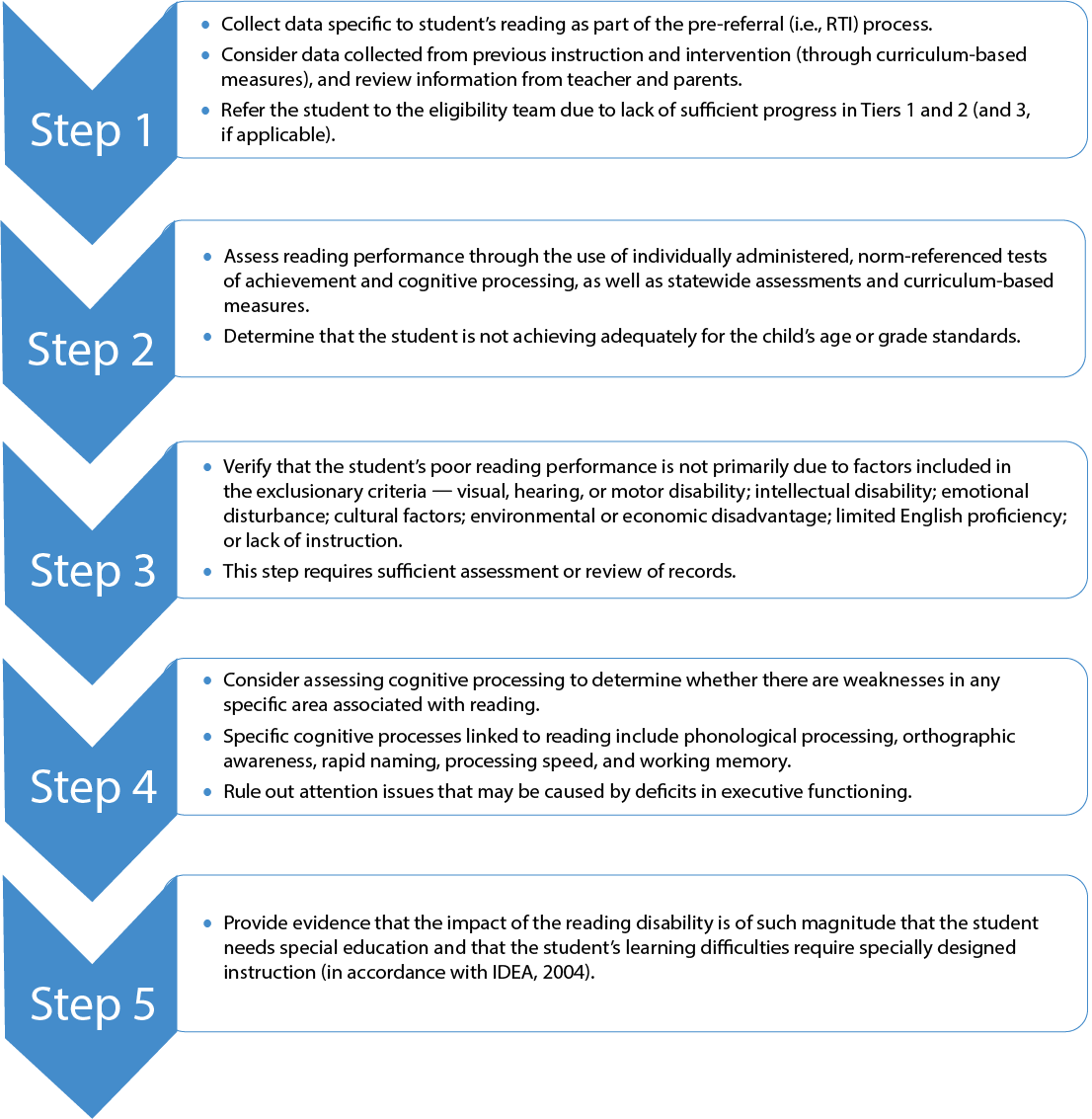

Figure 1 shows how Monica, along with other members of the eligibility team, might proceed through the eligibility process with Sam. The process, as outlined in Figure 1 (adapted from Flanagan et al., 2002; Flanagan, Ortiz, Alfonso, & Mascolo, 2006), is designed to address many of the SLD eligibility criteria.

Figure 1. Framework for Eligibility as a Student with a Reading Disability

Assessing Reading Skills

The first and second steps in Figure 1 pertain to demonstrating evidence of low achievement in reading. In thinking about Sam, Monica and the eligibility team would first consider data specific to Sam’s reading performance that were collected as part of the prereferral (i.e., RTI) process.

How did Sam perform on curriculum-based measures of letter-naming fluency, letter–sound fluency, oral reading fluency, nonsense word reading, and spelling? In first grade (or prior), has Sam shown any signs of oral language deficits (i.e., slow rate of vocabulary acquisition, word-finding difficulties, difficulties rhyming, frequent grammatical errors when speaking)?

If the answer is yes to one or more of these questions, the team would proceed to Step 2, which involves formal assessment of Sam’s reading skills to determine whether he is not achieving adequately for his age or grade-level standards.

Table 1 lists the reading-related skill areas in which one would expect to see deficits among students with dyslexia, specific assessments that may be used, and examples of how the skills are assessed.

Table 1: Assessing Reading Performance

| Relevant reading area | Specific assessments that may be used | Examples of tasks |
|---|---|---|
| Letter-sound knowledge | | |
| Word decoding | | |
| Reading fluency | | |
| Spelling (encoding) | | |
| Reading comprehension | | |

* Text fluency

** Single-word fluency

Letter–sound knowledge

Letter–sound knowledge refers to the student’s familiarity with letter forms, names, and corresponding sounds, which may be measured by recognition, production, and writing tasks. To measure letter–name fluency, the student may be given a random list of uppercase and lowercase letters and asked to identify the names of as many letters as possible in 1 minute.

Similarly, on tests of letter-sound fluency, the student may be given a random list of uppercase and lowercase letters and have 1 minute to identify as many letter sounds as possible. A student may also be asked to write individual letters that are dictated or write the letter or letter combination that corresponds to a sound that is presented orally (e.g., “Write the letter that makes the /m/ sound”).
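The scoring behind a 1-minute fluency probe is simple arithmetic: count the correct responses and express them as a per-minute rate. A minimal sketch follows, assuming a hypothetical helper named `correct_per_minute` and made-up response data. Published measures have their own scoring rules, which this does not reproduce.

```python
from typing import Sequence


def correct_per_minute(responses: Sequence[bool], seconds_elapsed: float = 60.0) -> float:
    """Return correct responses per minute for a timed probe.

    `responses` holds one entry per item attempted (True = correct).
    """
    if seconds_elapsed <= 0:
        raise ValueError("seconds_elapsed must be positive")
    return sum(responses) * 60.0 / seconds_elapsed


# Hypothetical probe: 30 letters attempted in 60 seconds, 24 named correctly
attempted = [True] * 24 + [False] * 6
print(correct_per_minute(attempted))  # 24.0
```

Passing the elapsed time as a parameter lets the same function handle a student who finishes the list before the minute is up.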

Word reading

It is also critical to assess the student’s word-reading skills, which requires assessing accuracy and fluency with both real and nonsense words in timed and untimed situations. Timed tests of real and nonsense word reading provide information as to whether the student has fluency in word identification.

Untimed tests of real and nonsense word reading provide information as to whether the student has requisite word-reading accuracy. Untimed tests of nonword or nonsense word reading assess knowledge about the letter–sound correspondences of English (phonics).

Fluency

When assessing text fluency, both oral and silent reading fluency can be evaluated. Tests that are commonly used to assess fluency (see Table 1) tend to measure reading rate more specifically. Reading rate comprises both word-level automaticity and the speed and fluidity with which a reader moves through connected text (Hudson, Lane, & Pullen, 2005).

Automaticity is quick and effortless identification of words in or out of context (Ehri & McCormick, 1998; Kuhn & Stahl, 2000).

Measuring reading rate should encompass consideration of both single-word reading automaticity (e.g., Test of Word Reading Efficiency, 2nd ed.; Torgesen, Rashotte, & Wagner, 2012) and reading speed in connected text (e.g., Woodcock-Johnson IV Tests of Achievement Sentence Reading Fluency; Schrank, Mather, & McGrew, 2014a).

Assessment of automaticity can include tests of sight-word knowledge or tests of decoding rate. Tests of decoding rate often consist of rapid decoding of nonwords. Measurement of nonword reading rate ensures that the construct being assessed is the student’s ability to automatically decode words using letter–sound knowledge (Hudson et al., 2005) as opposed to rapid recognition of real words that the student has memorized.

Spelling

Spelling tests can provide information about a student’s understanding of and ability to apply phonics to the spelling of words and of a student’s orthographic and morphological awareness (Berninger, 2007).

Traditional spelling tests can be examined to determine whether a student uses correct or incorrect letter combinations and whether the student’s spellings reflect knowledge of conventions, such as le endings. For example, the student who spells bell as ble is beginning to notice graphemic conventions. The student who puts odd letter combinations together, such as kpz, does not have a strong sense of English orthography.

Spelling tests also provide information about a student’s morphological awareness. For example, the student who spells lived as livt does not have knowledge of the -ed convention for past tense (Berninger, 2007).

Most achievement tests contain these traditional spelling tests.

Tests that ask students to spell nonsense words are less common but are useful in assessing a student’s knowledge of phonics (e.g., Woodcock-Johnson IV Tests of Achievement Spelling of Sounds; Schrank et al., 2014a).

Of course, a student’s response to traditional spelling tests will also provide information about phonics knowledge. Words that are spelled incorrectly but phonetically suggest that the student is developing mastery of phonics but has not yet created accessible orthographic representations (i.e., visual images). For instance, common error patterns on Sam’s weekly spelling tests included wunts for once, thot for thought, and bin for been.

Comprehension

Reading comprehension tests can vary along many dimensions, including mode of administration. Measures of reading comprehension can be individually administered (i.e., as part of a comprehensive evaluation to determine eligibility for special education services) or group administered, such as state-mandated assessments of reading.

Comprehension measures also vary in the type of text students are expected to read (e.g., narrative, informational, or persuasive material), time constraints and pressure for speed, whether or not students can refer back to the text in answering comprehension questions, and response format or how students are expected to demonstrate comprehension of what they have read. It is important to note that students may perform differently depending on the mode of administration, type of text, and format of the test.

Three response formats are especially common: cloze, question answering, and retellings.

Cloze format tests (e.g., Woodcock-Johnson IV Tests of Achievement Passage Comprehension; Schrank et al., 2014a) present sentences or passages with blanks in them (e.g., “The birds were flying in the ____”); the student is expected to read the text and provide an appropriate word to go in the blank (for the previous example, a word such as sky or air).

In tests with a question–answer format, the student reads passages and answers questions about them; the questions may involve multiple-choice or open-ended items and may be answered orally (e.g., Wechsler Individual Achievement Test, 3rd ed.; Wechsler, 2009) or in writing.

Retellings require a student to read a text and then orally tell about what was just read, usually with some sort of coding system for scoring the quality of the retelling.

Reading comprehension tests that use a multiple-choice format require the student to answer questions based on a passage the student just read. One of the concerns with this format involves passage independence, that is, the likelihood that on some items, a student could respond correctly (based on prior knowledge or educated guessing) without having read the accompanying passage (e.g., “What colors were on the American flag?”; Keenan & Betjemann, 2006).

Despite decades of ongoing attempts by researchers to alert test developers to passage independence and its consequences, studies have consistently uncovered passage-independent items on standardized reading comprehension measures, including the Minnesota Scholastic Aptitude Test (Fowler & Kroll, 1978), Stanford Achievement Test (Lifson, Scruggs, & Bennion, 1984), SAT (e.g., Daneman & Hannon, 2001; Katz, Lautenschlager, Blackburn, & Harris, 1990), Test of English as a Foreign Language (Tian, 2006), Nelson-Denny Reading Test (Coleman, Lindstrom, Nelson, Lindstrom, & Gregg, 2009), and Gray Oral Reading Test (Keenan & Betjemann, 2006).

Consideration of Exclusionary Criteria

At the next level (Figure 1, Step 3), the eligibility team must determine that a deficit is not primarily due to factors included in the exclusionary criteria: visual, hearing, or motor disability; intellectual disability; emotional disturbance; cultural factors; environmental or economic disadvantage; limited English proficiency; or lack of instruction (IDEA, 2006).

Pre-referral information generated through a multitiered system of supports (such as RTI models) is useful in addressing this criterion. Within such a model, it is presumed that information on vision, hearing, and the impact of cultural, linguistic, and other noncognitive factors is considered as part of providing interventions. Because RTI models also emphasize appropriate interventions and monitoring of student progress, they provide information as to the adequacy of the instruction received. This step requires sufficient assessment or review of records, interviews with parents and former teachers, and direct observation of the student.

Assessing Cognitive Processes

As noted previously, dyslexia is not a disability category under IDEA (2006), but students with dyslexia can be found eligible for services under the SLD category. Because SLD is defined, in part, as “a disorder in one or more of the basic psychological processes involved in understanding or in using language, spoken or written” (20 U.S.C. §1401[30]; 34 C.F.R. §300.8[c][10]), Step 4 (Figure 1) suggests assessing cognitive processes. It is important to point out, however, that the emphasis is not on generating a global IQ score but on identifying a pattern of performance across the cognitive areas assessed, although a consistently low profile may suggest the need for cognitive assessment to rule out the presence of an intellectual disability.

Whereas the general and special education teachers play crucial roles in Steps 1 through 3, typically, the school psychologist or speech-language pathologist assesses specific areas of cognitive processing to determine whether there is a weakness in any specific area and if that area is associated with reading (see Figure 1).

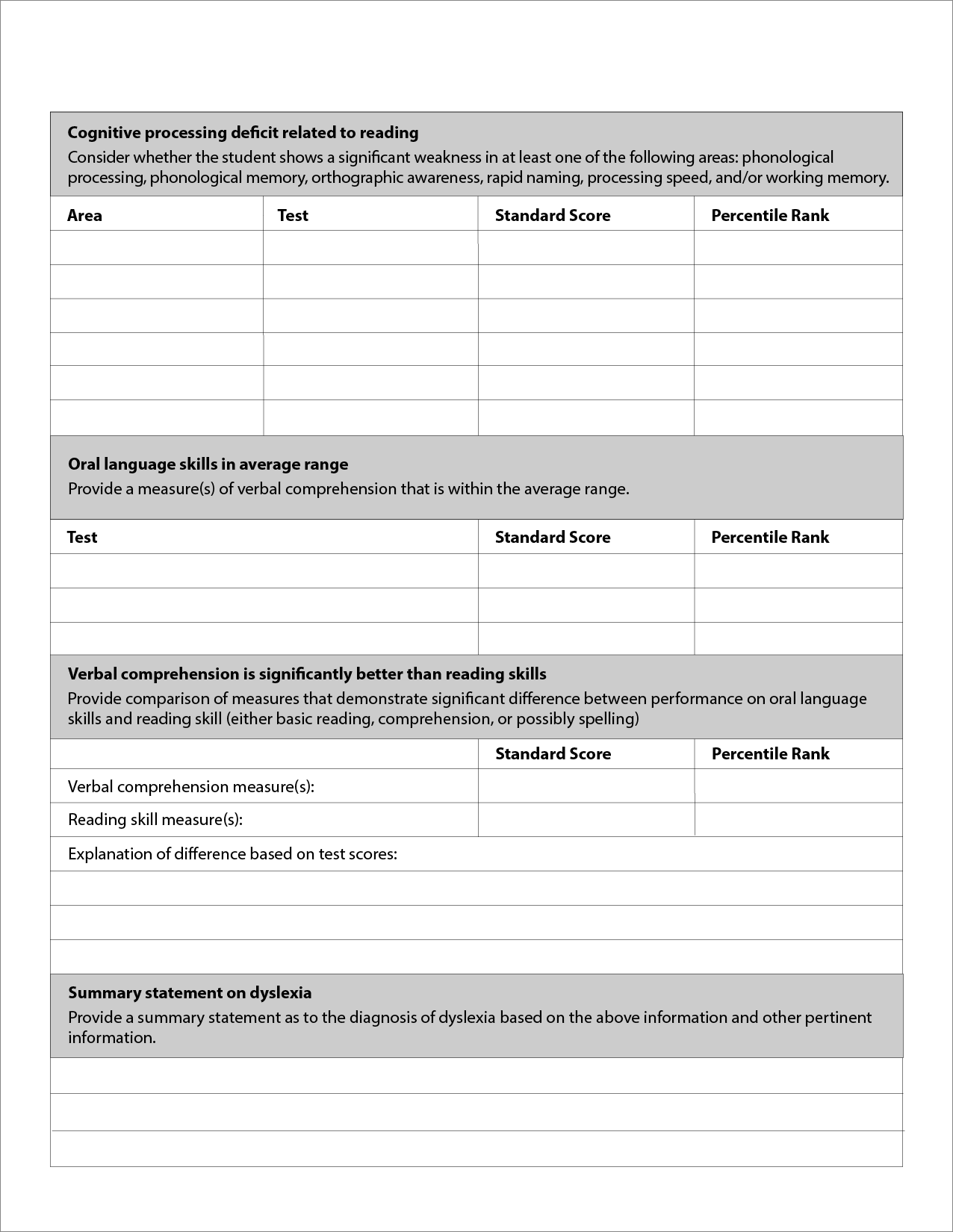

Specific cognitive processes linked to reading include phonological processing, orthographic awareness, rapid naming, processing speed, and working memory (see Table 2).

The eligibility team may also want to assess executive functioning (the mental processes that enable students to plan, focus attention, remember instructions, and juggle multiple tasks successfully) due to the high comorbidity (~45%) of SLD and attention deficit hyperactivity disorder (ADHD; DuPaul, Gormley, & Laracy, 2013).

Phonological awareness is the awareness of and access to the sound structure of oral language. It is assessed through tasks that require the student to manipulate the sounds in words (e.g., Comprehensive Test of Phonological Processing, 2nd ed.; Torgesen, Rashotte, & Pearson, 2013; Woodcock-Johnson IV Tests of Oral Language; Schrank, Mather, & McGrew, 2014b).

Orthographic awareness, or the ability to form, store, and access orthographic representations (Stanovich & West, 1989), can be assessed through tasks such as orthographic choice (e.g., “Circle the correctly spelled word: rume–room, snow–snoe, wrote–wroat”), homophone choice (e.g., “Which is a fruit? pair–pear”), and word scramble tasks (e.g., bdir–bird).

Rapid naming is evaluated through tests that require the student to quickly name letters, numbers, colors, or objects.

Working memory is essential for students when decoding words: a student must be able to connect letters with the correct sounds, put them together to form a word, keep that word in mind while reading the next word, string all those words together to form a sentence, and then figure out the meaning of all those words. It is assessed through retrieval fluency and sequencing tasks (see Table 2).

Students with dyslexia will usually have relatively circumscribed weaknesses in areas such as phonological processing, but their broad oral language comprehension will typically be in the average range or higher.

Step 4, as it relates to the operational definition of SLD, addresses the deficit in a basic psychological process that is part of the definition of SLD and goes further in seeking to determine whether the area of cognitive weakness may be causally related to the area of academic weakness (e.g., reading).

Likewise, it would be expected that the student would not show deficits in areas of cognitive functioning that are not related to the area of low achievement (e.g., spatial relations).

Table 2: Assessing Cognitive Processes

| Relevant cognitive areas | Specific assessments that may be used | Examples of tasks |
|---|---|---|
| Phonological awareness | | |
| Phonological memory | | |
| Orthographic awareness | | |
| Rapid naming | | |
| Processing speed | | |
| Working memory | | |

Evidence of Substantial Impact

To proceed to Step 5, the eligibility team would likely have determined that two criteria were met in Step 4: (a) identification of a deficit in at least one area of cognitive ability or processing and (b) identification of an empirical or logical link between low functioning in an identified area of cognitive ability or processing and a corresponding weakness in academic performance (Flanagan, Ortiz, Alfonso, & Mascolo, 2006).

In Sam’s case, the team would expect scores at or below the 25th percentile on measures of letter-sound knowledge, decoding, reading fluency, or spelling. Similarly, the team would expect him to score at or below the 25th percentile on measures of phonological awareness, rapid naming, and working memory, because weaknesses in these areas would likely be causing the “substantial impact” on Sam’s reading-related skills.
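The percentile comparison described for Sam can be illustrated with a short sketch. The 25th-percentile cutoff comes from the text. The percentile ranks, the `scores` dictionary, and the `areas_at_or_below` helper are hypothetical and are not data from Sam's evaluation.

```python
CUTOFF_PERCENTILE = 25  # threshold named in the text for Sam's case

# Hypothetical percentile ranks for illustration only
scores = {
    "letter-sound knowledge": 12,
    "decoding": 9,
    "reading fluency": 16,
    "spelling": 21,
    "phonological awareness": 10,
    "rapid naming": 25,
    "working memory": 18,
    "oral language comprehension": 55,
}


def areas_at_or_below(percentiles, cutoff=CUTOFF_PERCENTILE):
    """Return the areas whose percentile rank is at or below the cutoff."""
    return sorted(area for area, pct in percentiles.items() if pct <= cutoff)


flagged = areas_at_or_below(scores)
for area in flagged:
    print(f"{area}: {scores[area]}th percentile")
```

A score exactly at the cutoff (rapid naming in this made-up profile) is included, matching the "at or below" wording, while the average-range oral language score is not flagged.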

The final step requires evidence of substantial impact of the SLD. In addition, in order for a student to be eligible for services under IDEA, the eligibility team must determine that the student’s learning difficulties require specially designed instruction. These criteria act as a safety net for determining the need for special education as identified in IDEA (2006) as one purpose of the comprehensive evaluation.

Thus, it is possible (though unlikely) that a student may have a SLD as identified through this operational definition but would not require specialized instruction due to adequate performance in the classroom. In such an instance, the child would not meet criteria for special education services.

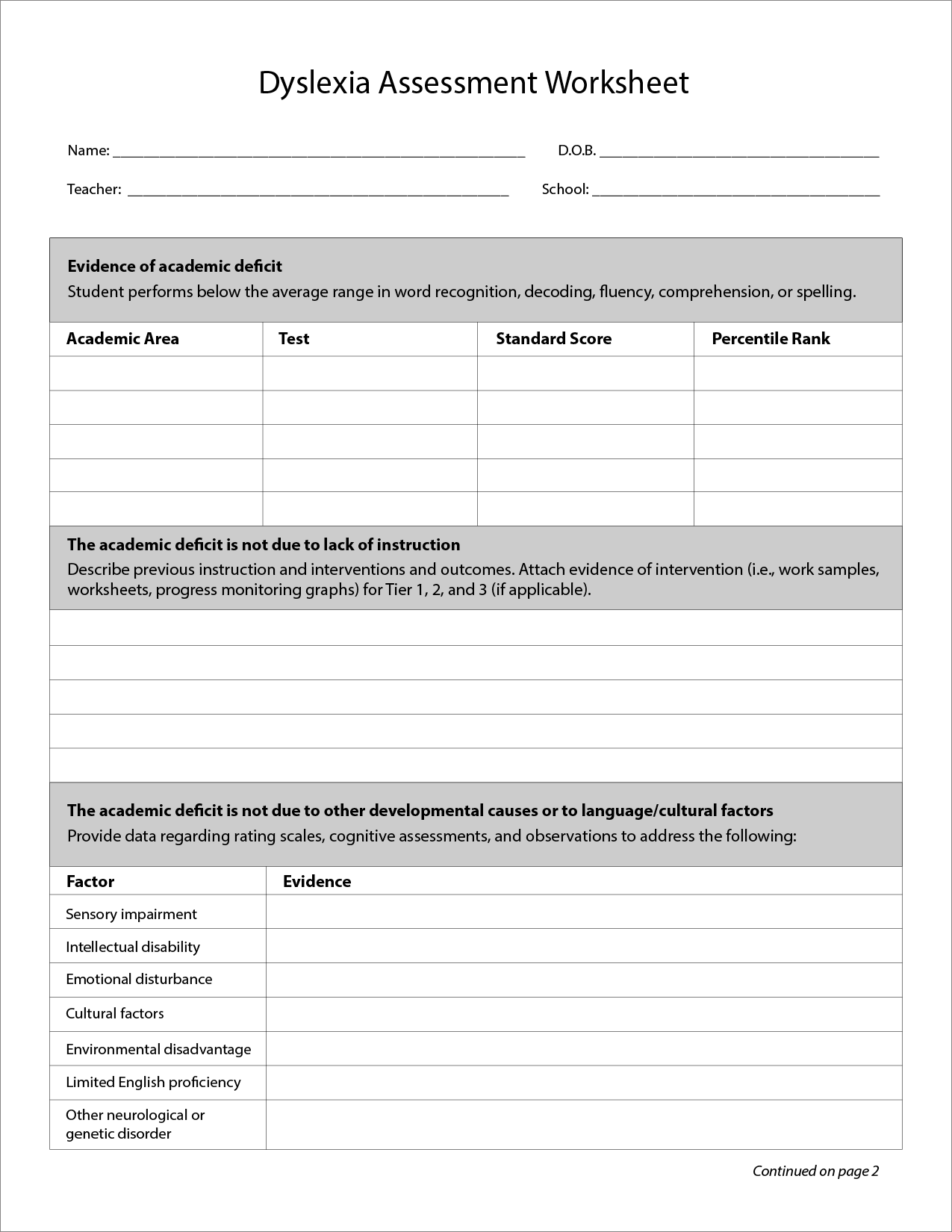

Sam’s eligibility team answered yes to all of these questions and thus determined that Sam does meet eligibility criteria for SLD. Figure 2 is an example of the Dyslexia Assessment Worksheet that the eligibility team could use in considering this determination.

In summary, when evaluating a student, the eligibility team should be able to answer yes to the following:

- Does the student perform significantly below peers on measures of letter-sound knowledge, word decoding, reading fluency, and/or spelling?

- Has the student had sufficient instruction?

- Has it been determined that the difficulties identified earlier are not due to another factor, such as intellectual disability, ADHD, or emotional disturbance?

- Does the student have a deficit in phonological processing, phonological memory, orthographic awareness, rapid naming, processing speed, or working memory?

- Does the student have broad oral language abilities within the average range?
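The checklist above amounts to requiring a yes on every question. A minimal sketch of that logic follows, assuming a hypothetical `CHECKLIST` of paraphrased items and hypothetical helper functions. It is a way to organize the team's answers, not a substitute for the team's judgment.

```python
# Paraphrased from the five summary questions; the wording is an assumption
CHECKLIST = (
    "performs significantly below peers on reading-related measures",
    "has had sufficient instruction",
    "difficulties are not due to another factor",
    "has a deficit in a reading-related cognitive process",
    "broad oral language abilities are within the average range",
)


def unmet_criteria(answers):
    """Return items not answered yes; missing answers count as unmet."""
    return [item for item in CHECKLIST if not answers.get(item, False)]


def all_criteria_met(answers):
    """True only when every checklist item was answered yes."""
    return not unmet_criteria(answers)


# Hypothetical answers in which the team said yes to every question
sam_answers = {item: True for item in CHECKLIST}
print(all_criteria_met(sam_answers))  # True

# If instruction had been insufficient, that item would be reported as unmet
partial = dict(sam_answers, **{"has had sufficient instruction": False})
print(unmet_criteria(partial))  # ['has had sufficient instruction']
```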

Figure 2: Dyslexia Assessment Worksheet


Conclusion

Schools and teachers play an essential role in identifying students with reading difficulties, including dyslexia, and are responsible for teaching them to read. It is well understood that high-quality instruction can prevent some reading problems and reduce the impact of more-severe reading difficulties (Mather & Wendling, 2011).

The challenge is to ensure that teachers understand how to identify reading difficulties early, use data collected through the assessment process to make eligibility decisions, and link data to the development of the IEP.

Reading is a gateway skill — the ability to read is fundamental to and facilitates all academic learning. When students’ reading development lags behind that of their classmates, they are at a disadvantage not only in reading but also in writing, mathematics, and other content areas.

Early identification of dyslexia is essential so that the student not only learns to read but also understands why reading is hard, so that any associated social and emotional difficulties can be mitigated.

Lindstrom, J.H. (2019). Dyslexia in the Schools: Assessment and Identification. Teaching Exceptional Children, Vol. 51, No. 3, pp. 189–200. DOI: 10.1177/0040059918763712